5G user equipment receiver performance is critical for high-quality connectivity and maximum data throughput. Block-error rate provides an effective metric in demodulation and determining receiver sensitivity.

Mobile device users expect to seamlessly stream videos, quickly access web pages, download data, and never experience a dropped voice call. Downlink throughput has a direct impact on all of these expectations. A wireless device’s transmitter and receiver performance significantly impacts these abilities. Receiver performance dictates the maximum data throughput of a user equipment (UE).

Receiver sensitivity is a key measurement that engineers can perform to characterize a receiver’s performance. It reflects the receiver’s ability to demodulate data effectively in the poorest of radio conditions. Per 3GPP specifications, the reference sensitivity of a UE is defined as the minimum receive power level required to achieve a throughput ≥95% of the maximum possible throughput of a given reference measurement channel.
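As a rough illustration of that definition, the sketch below walks a power sweep from the strongest level downward and reports the lowest level that still meets the 95% throughput criterion. The function name, the sweep values, and the throughput units are hypothetical and chosen only for illustration; they do not come from the 3GPP specifications or from any test equipment API.

```python
def reference_sensitivity(sweep, max_throughput, ratio=0.95):
    """Return the lowest receive power (dBm) that still meets the throughput target.

    sweep: iterable of (power_dbm, throughput) pairs (hypothetical measurements).
    Returns None if even the strongest level fails the target.
    """
    target = ratio * max_throughput
    sensitivity = None
    # Walk from the strongest power level down and stop at the first failure.
    for power_dbm, throughput in sorted(sweep, reverse=True):
        if throughput < target:
            break
        sensitivity = power_dbm
    return sensitivity


# Hypothetical sweep: (receive power in dBm, measured throughput in kbps)
sweep = [(-90.0, 1000.0), (-92.0, 998.0), (-94.0, 960.0),
         (-95.0, 940.0), (-96.0, 700.0)]
print(reference_sensitivity(sweep, max_throughput=1000.0))  # -94.0
```

Stopping at the first failing level, rather than taking the minimum of all passing levels, avoids reporting a sensitivity below a power level at which the receiver has already failed.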

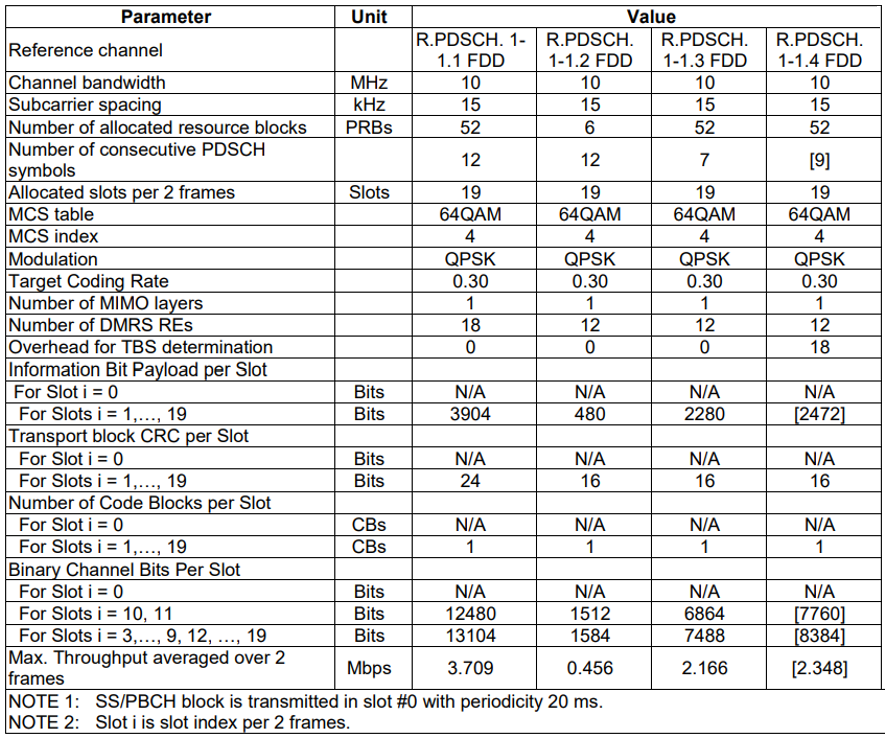

Table 1 shows that for a certain bandwidth and sub-carrier spacing (SCS), the reference channels are composed of a specific modulation scheme and coding rate (MCS index) over a given number of allocated resource blocks. The table also specifies the maximum throughput per code block averaged over a few sub-frames or frames. These downlink fixed reference channels (FRC) waveform configurations are defined in the 3GPP specifications 38.101 and 38.521 and are used for UE input testing.

Table 1. 3GPP 38.521, Annex A.3.2.1.1, reference measurement channels for physical downlink shared channel (PDSCH) performance for SCS 15 kHz, FR1, QPSK modulation.

A higher MCS depth yields higher spectral efficiency translating into higher data throughput. The MCS depth selection depends on two interrelated factors: radio signal quality and block error rate (BLER).

Signal-to-noise ratio (SNR), the ratio of the received signal power to the noise power (a difference when both are expressed in decibels), represents radio quality. Poor radio conditions or a signal closer to the noise floor can lead to data corruption, resulting in retransmissions between the transmitter and the receiver. Those retransmissions degrade physical layer throughput and increase transmission latency. Because a higher MCS packs more data bits per resource element (RE), it requires a cleaner channel, that is, higher SNR radio conditions. The relationship between MCS and SNR is not as direct as it appears, however. A third element that adds to the equation is the error rate. The error rate must be under a certain threshold for effective demodulation and to maintain the communication link between the next generation node B (gNodeB) and the UE.
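For reference, the standard definition of SNR in linear and decibel form can be written as follows. The symbols for signal and noise power are introduced here for illustration only:

```latex
\mathrm{SNR} = \frac{P_{\text{signal}}}{P_{\text{noise}}},
\qquad
\mathrm{SNR}_{\text{dB}}
  = 10 \log_{10}\!\left(\frac{P_{\text{signal}}}{P_{\text{noise}}}\right)
  = P_{\text{signal}}\,[\text{dBm}] - P_{\text{noise}}\,[\text{dBm}]
```

For example, a received signal at −85 dBm over a noise floor of −100 dBm corresponds to an SNR of 15 dB.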

What is BLER?

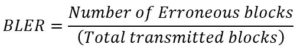

3GPP defines a specific physical-layer error estimation technique called block error rate (BLER) — the ratio of the number of transport blocks received in error to the total number of blocks transmitted over a certain number of frames. This measurement is one of the simplest metrics used to measure the physical layer performance of a device and is performed after channel de-interleaving and decoding by evaluating the cyclic redundancy check (CRC) on each transport block received.

BLER closely reflects the RF channel conditions and the level of interference. For a given modulation depth, the cleaner the radio channel or the higher the SNR, the less likely a transport block is to be received in error, which means a lower BLER. The inverse also holds true: for a given SNR, the higher the modulation depth, the higher the probability of error due to interference, and therefore the higher the BLER. Bearing that in mind, BLER proves to be one of the key measures of:

- Receiver sensitivity

- Download throughput

- In-sync and out-of-sync indication during radio link monitoring (RLM)

Reference sensitivity: key for cell edge users

The distance between the UE and base station dictates how well a device can receive a signal, thus making reference sensitivity even more important for devices at the edge of the cell’s transmission distance.

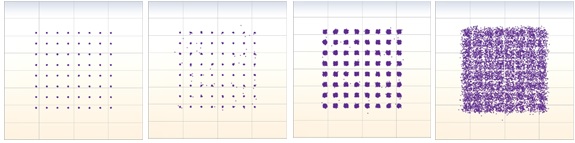

Once you understand the relationship between BLER and SNR, you can see how the measurement can prove to be one of the elemental indicators of call failures, call drops, ping-pong handovers, poor reliability, and higher latency at the cell edge or significantly far from the gNodeB. As an example, assume an average SNR of 20 dB, at which QPSK modulation may be more than acceptable, but any incremental increase in MCS could lead to a decrease in receiver sensitivity and increased latency. Figure 1 shows how increasing the MCS (left to right) makes the signal harder to demodulate.

Figure 1. As the MCS gets denser (left to right), the probability of error increases, making demodulation harder at the receiver, thus decreasing receiver sensitivity.

Among the target use models of 5G, ultra-reliable low-latency communication (URLLC) applications, which are highly sensitive to latency, must target a BLER between 10⁻⁹ and 10⁻⁵ at a latency below 1 ms, versus a typical value of 10⁻² in LTE.

Throughput as a function of BLER

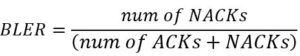

When performing receiver measurements, for every block of data payload received, the UE sends an acknowledgment (ACK) for each block successfully decoded and a negative acknowledgment (NACK) for each block failing CRC. Put simply, BLER is the ratio of the number of blocks failing CRC to the total number of blocks transmitted.
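The ACK/NACK counting described above can be sketched in a few lines. The function name and the feedback sequence are hypothetical; a real test system would obtain the feedback from its own measurement interface.

```python
def block_error_rate(feedback):
    """Compute BLER from per-block feedback, each entry "ACK" or "NACK"."""
    feedback = list(feedback)
    if not feedback:
        raise ValueError("no blocks were transmitted")
    # Each NACK corresponds to one transport block that failed its CRC.
    nacks = sum(1 for item in feedback if item == "NACK")
    return nacks / len(feedback)


# Hypothetical run: 100 blocks transmitted, 5 of them failing CRC.
feedback = ["ACK"] * 95 + ["NACK"] * 5
print(block_error_rate(feedback))  # 0.05
```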

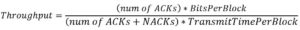

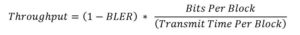

Throughput, on the other hand, is more than just the ratio of failing versus passing data blocks; it is a measure of the actual data bits successfully received in a certain duration, which can be described mathematically as:

In essence, throughput can be described in terms of BLER as:

Thus, as BLER decreases, the throughput increases.
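The relationships described in this section can be written out as a sketch. The symbol for maximum throughput is introduced here for illustration, and the second line assumes that every transport block carries the same number of bits and that failed blocks contribute nothing to the received data:

```latex
\begin{align}
\text{Throughput} &= \frac{\text{data bits successfully received}}{\text{measurement duration}} \\
\text{Throughput} &= T_{\max} \times (1 - \mathrm{BLER})
\end{align}
```

Under these assumptions, a BLER of 10% corresponds to a throughput of 90% of the maximum, and a BLER of 5% corresponds to the 95% level used in the reference sensitivity definition.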

At the physical layer in 5G and LTE, the typical BLER threshold is defined as ≤10%. To keep the BLER under this threshold, gNodeBs use a link-adaptation algorithm that, based on the UE’s feedback, signals a lower MCS. Doing so increases redundancy and reduces spectral efficiency in exchange for reliable data transfer.
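A minimal sketch of this idea follows. It is not the algorithm any particular gNodeB uses: the function name, the one-step adjustment, the rule for stepping back up, and the MCS index bounds are all assumptions made for illustration.

```python
def adapt_mcs(current_mcs, measured_bler, target_bler=0.10,
              min_mcs=0, max_mcs=28):
    """Return the next MCS index given the measured BLER.

    Hypothetical policy: step down one index when BLER exceeds the target,
    step up one index when BLER is below half the target, otherwise hold.
    """
    if measured_bler > target_bler:
        # Too many block errors: add redundancy by lowering the MCS.
        return max(min_mcs, current_mcs - 1)
    if measured_bler < target_bler / 2:
        # Comfortable margin: try a more efficient MCS.
        return min(max_mcs, current_mcs + 1)
    return current_mcs


print(adapt_mcs(10, 0.15))  # 9
print(adapt_mcs(10, 0.02))  # 11
print(adapt_mcs(10, 0.08))  # 10
```

Real link adaptation typically also draws on channel quality reports from the UE; this sketch uses only BLER to keep the relationship described above visible.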

Radio link monitoring (RLM)

Consecutive out-of-sync indications can cause the radio link to fail, resulting in call drops. Hence, for radio link failure detection and timely re-establishment, the UE actively performs radio link monitoring (RLM) on both the primary and secondary cells and uses BLER as a metric to indicate out-of-sync/in-sync status to higher layers.

To evaluate the downlink radio link quality, the UE monitors the reference signals configured for radio link monitoring, also referred to as RLM-RS. The network can configure Synchronization Signal Blocks (SSBs), Channel State Information Reference Signals (CSI-RSs), or a combination of both as RLM-RS resources. The UE uses these resources to determine whether it would be able to reliably decode a hypothetical physical downlink control channel (PDCCH) transmission.

During RLM, the UE assesses radio-link quality against the Qout and Qin thresholds configured by rlmInSyncOutOfSyncThreshold. Qout is the level at which the downlink radio link cannot be reliably received, and Qin is the level at which the downlink radio link can be received significantly more reliably than at Qout.

By counting the number of successful and unsuccessful decoding attempts for both Qout and Qin, the UE estimates the radio link quality by determining BLERin and BLERout (see Table 2) and signals in-sync and out-of-sync indications to the higher layers.

Per 3GPP, a BLER above 10% sustained for a pre-specified duration leads to a radio-link failure, whereas a BLER of 2% or lower indicates that the link is in sync and can prevent the connection from being released.
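The threshold comparison can be sketched as below, taking the 10% and 2% levels as the out-of-sync and in-sync BLER thresholds. This is a simplified illustration: the function name is hypothetical, a single BLER estimate stands in for the per-resource evaluation a UE performs, and the timers and counters that turn indications into a radio-link failure are not modeled.

```python
def rlm_indication(estimated_bler, bler_out=0.10, bler_in=0.02):
    """Classify an estimated hypothetical-PDCCH BLER for radio link monitoring.

    Returns "out-of-sync", "in-sync", or None when the estimate falls
    between the two thresholds (no indication in this sketch).
    """
    if estimated_bler > bler_out:
        return "out-of-sync"
    if estimated_bler < bler_in:
        return "in-sync"
    return None


for bler in (0.25, 0.05, 0.01):
    print(bler, rlm_indication(bler))
```

The gap between the two thresholds provides hysteresis, so a link hovering near 10% BLER does not flip between the two indications on every evaluation.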

Conclusion

Although several other parameters dictate the performance of a device, BLER proves to be a fundamental receiver measurement that you can use as a basic indicator of a device’s call quality, throughput, and sensitivity. The simplicity of this error-estimation technique makes it useful not just for R&D but also for manufacturing and field test.

Khushboo Kalyani is an experienced telecommunications professional with experience in 5G and LTE cellular technologies. She is currently a product manager at LitePoint focused on 5G test systems. Prior to joining LitePoint, she worked as an LTE modem test engineer at Qualcomm for over 6 years. Khushboo holds a MS degree in Telecommunications from University of Maryland at College Park.

