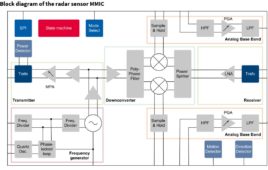

A revolutionary piece of technology, created by researchers at the University of St Andrews, can identify an object when it is placed on a small radar sensor.

The device, called RadarCat (Radar Categorisation for Input and Interaction), can be trained to recognise different objects and materials, from a drinking glass to a computer keyboard, and can even identify individual body parts.

The system, which employs a radar signal, has a range of potential applications, from helping blind people tell apart the contents of two otherwise identical bottles, to automatic drinks refills in restaurants, replacing bar codes at checkout, automatic waste sorting or even foreign language learning.

Designed by computer scientists at the St Andrews Computer Human Interaction (SACHI) research group, the sensor was originally provided by Google ATAP (Advanced Technology and Projects) as part of their Project Soli alpha developer kit program. The radar-based sensor was developed to sense micro and subtle motion of human fingers, but the team at St Andrews discovered it could be used for much more.

Professor Aaron Quigley, Chair of Human Computer Interaction at the University, explained, “The Soli miniature radar opens up a wide range of new forms of touchless interaction. Once Soli is deployed in products, our RadarCat solution can revolutionise how people interact with a computer, using everyday objects that can be found in the office or home, for new applications and novel types of interaction.”

The system could be used in conjunction with a mobile phone. For example, it could be trained to open a recipe app when you hold the phone to your stomach, or to change its settings when it is operated with a gloved hand.

A team of undergraduate and postgraduate students at the University’s School of Computer Science was selected to show the project to Google in Mountain View in the United States earlier this year. A snippet of the video was also shown on stage during Google’s annual conference (I/O).

Professor Quigley continued, “Our future work will explore object and wearable interaction, new features and fewer sample points to explore the limits of object discrimination.

“Beyond human computer interaction, we can also envisage a wide range of potential applications ranging from navigation and world knowledge to industrial or laboratory process control.”