Despite the intense hype, 5G looks like a stepping stone to the real thing.

The 5G hype is so intense that it’s crushing us. While we have yet to see if 5G can deliver on its promises, the research community is already exploring what 6G might entail. On October 20 and 21, Northeastern University Institute for the Wireless Internet of Things and Interdigital hosted a virtual 6G Symposium, bringing together people from academia, industry, and government to discuss the limitations of 5G and what the next generation of wireless and wired networks must do. Listening to the speakers describe 5G’s limitations in terms of data rates and latency puts the hype in perspective.

Of the several key takeaways from the 6G Symposium, two stand out: AI/ML and spectrum. The two are related. Indeed, AI/ML cuts across just about every symposium topic.

While the specifications and engineering behind 5G were based on use cases, something that didn’t occur in previous generations, use cases will likely drive 6G development even more. With 6G, we could see holographic images (Figure 1) transmitted over networks and air interfaces. Sensing of location with centimeter or better resolution could also factor into 6G development. We could also see ad hoc networks using drones. “6G will provide wireless services, not just communications,” said Prof. Walid Saad of Virginia Tech. “We will stop thinking of smartphones and start looking at humans and machines communicating with surfaces.”

To get to the point where the person is part of the network, data rates must go beyond those available in 5G. Afif Osseiran, vice-chairman of the board at 5G-ACIA for Ericsson, said that data rates must increase by 10x to 100x over 5G. That means at least 10 Gbps is needed before augmented reality/virtual reality (AR/VR) will achieve expected user experiences. What’s driving these experiences? Gaming, of course, but new uses will emerge just as they did with 4G and will with 5G. It’s inevitable.

More data, less time

Not only must data rates increase, but the 1 ms latency that we hear about with 5G will seem like an eternity in 2030. “5G will fail on latency,” said Prof. Danijela Cabric of UCLA. “We need latency of 100 µsec,” she added. “With 6G,” said Mazin Gilbert, AT&T’s VP of advanced technologies, in a keynote address, “musicians will be playing together in different parts of the world.” I welcome that day.

5G latency needs improvements in computing, memory, and protocols. Computing must move from the network cloud to the edge, something that’s taking place. Access networks must become an extension of the cloud, claims Larry Peterson, CTO of ONF. “Computing will need to follow you and your apps to minimize latency,” added Gilbert. Some computational power will, therefore, have to move to the cell tower. Memory speeds must also increase, and DDR5 will help. “Mobile devices won’t have enough computing power. We will need computing at the edge,” said Sunghyun Choi, SVP and head of Samsung’s Advanced Communications Research Center. Choi used the phrase “split computing,” where computing power at the edge will complement computing in the user device. “We will need AI in the device and AI at the edge,” added John Smee, vice president of engineering at Qualcomm. “We need low-latency connectivity between user devices and the network edge.”
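Propagation delay alone shows why latency targets push computing toward the edge. The sketch below is a back-of-the-envelope calculation, not something presented at the symposium. It assumes the latency figure is a round-trip budget, that signals travel through fiber at roughly two-thirds the speed of light (a common rule of thumb), and that processing, queuing, and protocol delays are zero. The function name and constants are illustrative.

```python
# Rough upper bound on how far away a server can sit for a given latency budget.
# Assumptions (not from the symposium): the budget is round-trip, the signal
# travels at ~2/3 the speed of light in fiber, and all other delays are zero.

SPEED_OF_LIGHT_M_PER_S = 299_792_458
FIBER_VELOCITY_FACTOR = 2 / 3  # rule-of-thumb assumption


def max_one_way_distance_km(round_trip_budget_s, velocity_factor=FIBER_VELOCITY_FACTOR):
    """Return the farthest one-way distance (km) reachable within the budget."""
    one_way_time_s = round_trip_budget_s / 2
    distance_m = SPEED_OF_LIGHT_M_PER_S * velocity_factor * one_way_time_s
    return distance_m / 1000


if __name__ == "__main__":
    for label, budget_s in [("1 ms", 1e-3), ("100 µs", 100e-6)]:
        print(f"{label} budget: {max_one_way_distance_km(budget_s):.1f} km")
    # 1 ms budget: 99.9 km
    # 100 µs budget: 10.0 km
```

Under these assumptions, a 100 µs budget leaves room for only about 10 km of fiber in each direction before any computing happens at all, which is consistent with the speakers’ point that computing has to sit close to the user.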

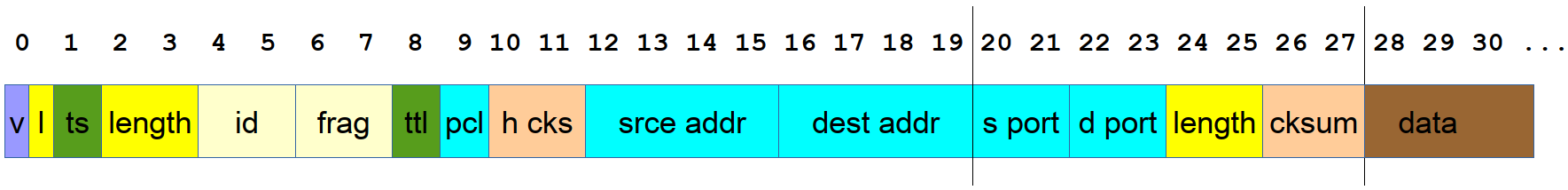

While some people claim that memory is a bottleneck to latency, others claim it’s the protocols (Figure 2) and the overhead that comes with them. “The bottleneck is in the network protocols,” claimed Cabric. “6G will have to solve latency at higher layers.” Network protocols add to latency because they need too much time to perform their tasks such as routing and resource scheduling. Protocols organize data into packets, but those packets need headers to route their payload. Internet Protocol is one such bottleneck, though an improvement is already in the works.

Figure 2. Internet Protocol’s packet overhead slows network throughput.
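To put rough numbers on header overhead, the sketch below computes the share of each packet taken up by headers. It uses the standard minimum header sizes (20 bytes for IPv4, 40 bytes for IPv6, 8 bytes for UDP, 20 bytes for TCP) and ignores options, link-layer framing, and retransmissions. The function and dictionary names are illustrative, and the example payload sizes are assumptions, not figures from the symposium.

```python
# Fraction of each packet consumed by protocol headers.
# Header sizes are the standard minimums; options and link-layer framing
# are ignored, so real overhead is somewhat higher.

HEADER_BYTES = {"IPv4": 20, "IPv6": 40, "UDP": 8, "TCP": 20}


def header_overhead(payload_bytes, layers):
    """Return header bytes divided by total packet bytes for the given layers."""
    header_total = sum(HEADER_BYTES[layer] for layer in layers)
    return header_total / (header_total + payload_bytes)


if __name__ == "__main__":
    for payload in (64, 1400):  # hypothetical small and large payloads
        for layers in (["IPv4", "UDP"], ["IPv6", "TCP"]):
            share = header_overhead(payload, layers)
            print(f"{payload:>5} B payload over {'+'.join(layers)}: {share:.1%} headers")
```

With these minimum sizes, a 64-byte payload over IPv4 and UDP spends about 30% of each packet on headers, while a 1,400-byte payload spends about 2%. Small, frequent messages of the kind low-latency applications tend to send are the ones that pay the most.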

Latency also occurs at the physical layer, noted Prof. Muriel Médard of MIT. That’s where coding plays a role. “We need the physical layer to give us the quality of experience that we want.” Médard pointed to several coding methods, including block codes, Reed-Solomon codes, and polar codes. Averaging used to reduce noise in signals adds delay. Médard pointed to guessing random additive noise decoding (GRAND) as a solution. Adam Thompson, senior solutions architect at NVIDIA, said that 6G will need to do more at the physical layer in less time than 5G. That will require incorporating more channel data into signal processing building blocks. The models used to develop the radio physical layer won’t be adequate for 6G, added John Kaewell, senior principal at Interdigital. “6G will be a data-driven wireless technology. We need large and open data sets for channel modeling at mmWave and terahertz frequencies. Datasets that describe massive MIMO, outdoor & indoor settings should be open and available to research.”

Better, smarter, faster

Networks will need to get smarter and quickly adapt to changing traffic demands. Steve Papa, CEO of Parallel Wireless, sees the end of the monolithic network, while Gilbert cited the need for self-learning networks that guarantee performance. That means networks will have to rely on AI/ML and performance data to adapt to traffic needs, and the radio will also need to adapt to changing conditions.

6G simply won’t happen without assistance from AI/ML. “Networks need a higher level of intelligence than we have today,” said Gilbert. “Intelligence also needs to be autonomous. We are not even close to that yet.”

“AI/ML will be a new tool for wireless,” added Choi. “6G should be used with AI from the start.” Smee noted that we will need AI/ML to design the next air interface because it’s getting too complex for humans. Tim O’Shea, CTO at DeepSig and professor at Virginia Tech, added, “We need to turn radio designs into data-driven problems that look at end-to-end optimization of comm links.”

Engineers will also need to use AI/ML when designing the radio access network (RAN). Smee noted that 5G networks are already changing because of open RAN. That openness will be crucial in the latter stages of 5G and into 6G. “Innovation is currently locked in the baseband unit (BBU),” said Gilbert. Opening the BBU and running software on commodity hardware will encourage the development of services closer to the user, reducing latency and creating new services and use cases. Gilbert sees open interfaces as the key to reaching microsecond latency needed to achieve the service-level agreements (SLAs) that users will demand. “Most networks are built on best effort, not on guarantees.”

Sharing is key

Although the 6G Symposium speakers emphasized AI/ML everywhere, wireless networks still need radio spectrum to operate. Most participants argued that even more spectrum is needed to keep up with demand and to bring new experiences to consumers and businesses.

Where will the additional spectrum come from? Some will come from going beyond today’s mmWave frequencies and into those above 100 GHz. Keysight’s Roger Nichols went so far as to mention terahertz frequencies. While that will help with delivering the 10 Gbps data rates that some envision, such frequencies suffer from coverage issues. Thus, there’s still great demand for spectrum at lower frequencies. That’s where spectrum sharing comes in.

DARPA program manager John Davies claims we need better spectrum reuse. Furthermore, today’s technologies and usage rules won’t work for 6G. For example, although the FCC opened the 3.5 GHz band for commercial use, new users must protect the Navy’s radar from interference and relinquish the channel on demand. That spectrum sharing relies on a database to prioritize usage, which takes time and increases latency. “We need dynamic spectrum sharing based on AI/ML,” said Monisha Ghosh, CTO at the FCC. “The database method is already outdated.”
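The database approach can be pictured as a priority lookup. The toy sketch below is not the real 3.5 GHz sharing protocol. It borrows the three-tier idea from the FCC’s rules for that band (incumbents, priority access licensees, and general authorized access), and everything else, including the class names, the user names, and the one-user-per-channel simplification, is hypothetical.

```python
# Toy model of database-driven spectrum sharing with priority tiers.
# Only the three-tier idea comes from the FCC's 3.5 GHz rules; the data
# model and the one-user-per-channel rule are simplifying assumptions.
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    INCUMBENT = 0        # e.g., Navy radar: always protected
    PRIORITY_ACCESS = 1  # licensed users
    GENERAL_ACCESS = 2   # everyone else


@dataclass(frozen=True)
class Request:
    user: str
    tier: Tier
    channel: int


def assign_channels(requests):
    """Give each channel to its highest-priority requester; others must vacate."""
    grants = {}
    # sorted() is stable, so ties within a tier go to the earlier request.
    for req in sorted(requests, key=lambda r: r.tier):
        grants.setdefault(req.channel, req.user)
    return grants


if __name__ == "__main__":
    requests = [
        Request("small-cell-A", Tier.GENERAL_ACCESS, channel=1),
        Request("navy-radar", Tier.INCUMBENT, channel=1),
        Request("operator-B", Tier.PRIORITY_ACCESS, channel=2),
    ]
    print(assign_channels(requests))
    # {1: 'navy-radar', 2: 'operator-B'}
```

Every change in demand means another round trip to the database before a radio knows whether it may transmit, which is the delay Ghosh’s remark points at.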

Because spectrum below 10 GHz is scarce and needs better spectrum sharing, everyone fights for the rights to use 600 MHz to 6 GHz. The FCC has opened the 6 GHz band for unlicensed use and there’s talk of making spectrum available up to 24 GHz with spectrum sharing. “While we need mmWave, it can’t replace the coverage possible with low-band,” said Ghosh.

These topics and others dominated the 2020 6G Symposium. I expect next year’s speakers will change their tunes as we learn more from 5G deployment and from continued research. 5G certainly seems as though it’s just the beginning.
